San Francisco-based robotics startup Physical Intelligence has recently unveiled research showcasing its latest AI model, π0.7. This model can direct robots to perform unfamiliar tasks without explicit training, a capability that has surprised even the company’s researchers. Physical Intelligence describes this development as an early but significant step toward creating a general-purpose robot brain—one that can be guided through new tasks using plain language and execute them successfully. If validated, these findings suggest that robotic AI may be nearing an inflection point similar to that experienced with large language models, where capabilities begin to compound in ways that exceed predictions based on the underlying data.

The core innovation of π0.7 lies in its compositional generalization, which allows the model to combine skills learned in different contexts to tackle problems it has never encountered before. Traditional robot training has typically amounted to rote memorization: collect data on a specific task, train a specialized model, and repeat the process for each new task. π0.7 breaks this pattern. Sergey Levine, co-founder of Physical Intelligence and a professor at UC Berkeley, notes that once the model crosses the threshold from performing only specific tasks to remixing learned skills in new ways, “the capabilities are going up more than linearly with the amount of data.”

One of the most striking demonstrations in the research involved an air fryer that the model had essentially never encountered during training. The investigators found only two relevant episodes in the entire training dataset: one where a different robot merely pushed the air fryer closed, and another from an open-source dataset where another robot placed a plastic bottle inside the appliance based on instructions. Remarkably, the model synthesized these fragments, along with broader web-based pretraining data, to develop a functional understanding of how the air fryer operates. Ashwin Balakrishna, a research scientist at Physical Intelligence, remarked, “With zero coaching, the model made a passable attempt at using the appliance to cook a sweet potato.” When given step-by-step verbal instructions—similar to how one might explain a task to a new employee—the robot performed successfully.

This coaching capability matters because it suggests that robots could be deployed in new environments and improved in real time, without additional data collection or model retraining. The researchers acknowledge several limitations, however. In at least one instance, they attributed a failure to their own team’s inability to prompt the model properly. Balakrishna explained that the failure mode sometimes lies not with the robot or the model but with the team’s prompt engineering skills. For example, an early air fryer experiment yielded only a 5% success rate, but after the team spent about half an hour refining the explanation of the task, the success rate jumped to 95%.

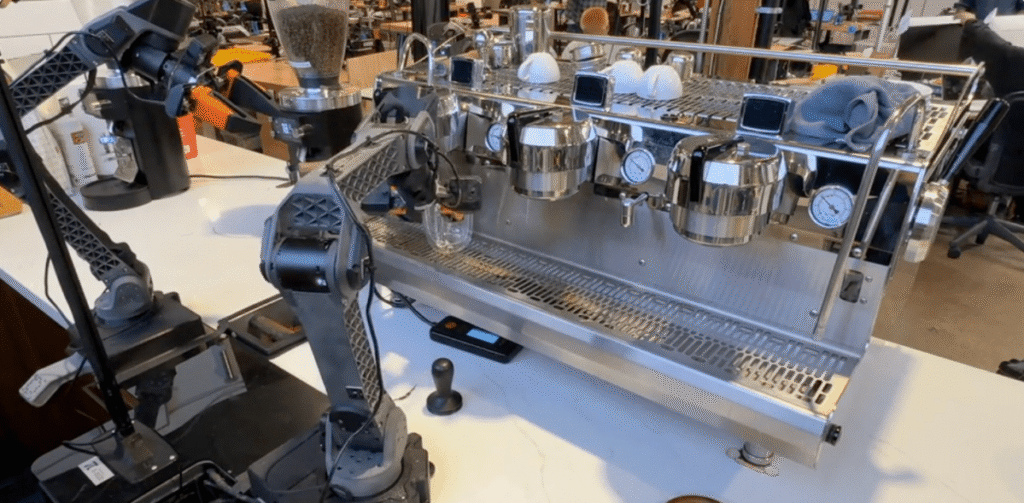

Despite these advancements, the model is not yet capable of executing complex multi-step tasks autonomously from a single high-level command. Levine stated, “You can’t tell it, ‘Hey, go make me some toast.’ But if you walk it through, ‘for the toaster, open this part, push that button, do this,’ then it actually tends to work pretty well.” The team also pointed out that standardized benchmarks for robotics do not currently exist, making external validation of their claims challenging. Instead, the company compared π0.7 against its previous specialist models, purpose-built systems trained on individual tasks, and found that the generalist model matched their performance across a range of complex activities, including making coffee, folding laundry, and assembling boxes.

What stands out most about this research is not any single demonstration but the extent to which the results surprised the researchers themselves, people who know the training data well and have a clear sense of what the model should be able to accomplish. Balakrishna said, “My experience has always been that when I deeply know what’s in the data, I can kind of just guess what the model will be able to do. I’m rarely surprised. But the last few months have been the first time where I’m genuinely surprised.” The paper hedges carefully throughout, describing π0.7 as showing “early signs” of generalization and “initial demonstrations” of new capabilities. These are research results, not a deployed product, and Physical Intelligence has been cautious from the outset regarding commercial timelines.

To date, Physical Intelligence has raised over $1 billion and was recently valued at $5.6 billion. The company is reportedly in discussions for a new funding round that could nearly double its valuation to $11 billion.
